This is adapted from a speech made last Thursday at the Future of Journalism and Our Democracy event hosted by the Centre for NZ Progress and the NZ Fabian Society. Significant changes have been made from Thursday's version a) in response to comments, b) because this is a different audience and medium, and c) because I had time on the flight home and I felt like it.

--

After the election last year, I wrote this post on why the media failed over Dirty Politics. Not just because Key won the election, but also because Katherine Rich is still sitting on the Health Promotion Authority, because Jason Ede got swept under the carpet, and because Judith Collins didn't actually face any consequences for any of the things she did in Dirty Politics – she just got fired accidentally, over something which wasn't even in Dirty Politics.

The response I got was really interesting. Journalists said that it's not their job to change governments or influence politics, they're just there to report the facts. And it's a really compelling argument: After all, if they're trying to change governments, we'd accuse them of political bias, and lose trust in their reporting. What this means in practice is that their job is to report the facts, but the outcome is up to the voters.

But there's a problem with this logic. Put it this way, let's say a hundred people are put on trial and none of them are convicted. Were the trials fair? Maybe. The answer is actually “we don't know”. We don't know if any of those people deserve to be convicted. But what we do have there is an idea of what “deserve to be convicted” means. It's an ideal of justice which relates to the outcome, and it allows us to question the processes.

If we assume that simply “reporting the facts” is living up to the ideals of the Fourth Estate, regardless of the outcome, we're conflating the processes with the ideals. This would leave us with no way of challenging the processes, or of asking how the process ought to evolve to better serve those ideals.

What are those ideals? There's a role for journalism to provide information, to bear witness to human stories, to act as the first draft of history, but when we talk about the Fourth Estate, we're talking about a very specific aspect of journalism.

When we use terms like “speaking truth to power”, we don't mean speaking any old truths to nobody in particular. It means forcing the powerful to acknowledge uncomfortable truths and holding them to account. It's more than just saying it and walking away.

When we say the Fourth Estate is “vital to democracy”, we mean that it has a vital role to play in maintaining the checks and balances of power in a democratic state. That's not a role for a passive observer. Power needs to be exercised to keep another power in check, and the very act of keeping another power in check is an exercise of power.

These are the goals of the Fourth Estate, and the ideals it ought to be measured against.

When truth is spoken to power and the powerful evade, lie, and shrug with an “I'm pretty relaxed about that”; when we know vindictive ministers, industry lobbyists and finance company directors run black ops campaigns for fun and profit; when we know these things, it's not enough to ask whether it was fairly reported.

We need to ask, was truth spoken to power in a meaningful sense? Were they held accountable? Did the Fourth Estate act as a check against abuses of power?

And if these things didn't occur, but the reporting was fair, what does that say about the process of “fair reporting”?

The idea that objective reporting isn't objective at all is very well-trodden. Basically, nobody is in the business of only reporting facts. The process of selecting which facts to include and which to exclude, of deciding how to organise and present them, these are inherently subjective processes. We call them “stories” because they're not just a collection of facts, but a meaningful interpretation of facts.

I want to focus on the kind of interpretations made by many political journalists – not all, but many – who editorialise: Instead of saying “they've done a bad thing” and explaining why, they say it's “a bad look”. Every action is interpreted in terms of its political repercussions.

Sometimes they do it because they know the story is bullshit. It's meaningless political theatre and the only way to justify why it's newsworthy is to say “it's a bad look”. Sometimes it's the opposite. They do it because they feel strongly about a story and don't want to open themselves up to accusations of bias.

But this is precisely what makes their judgement so important to the story. We all feel uneasy about political actors planting stories against each other in the media, and the role that the media play in facilitating their machinations. But whether running those stories is legitimate comes down to a journalist's judgement of why it ought to matter to the public.

It's not enough for journalists to assure us in the abstract that they make these judgements when Cameron Slater comes to them with a tip. Their rationale for why stories should matter to the public needs to become a part of the output of journalism as well, both as a form of accountability, and as a narrative device.

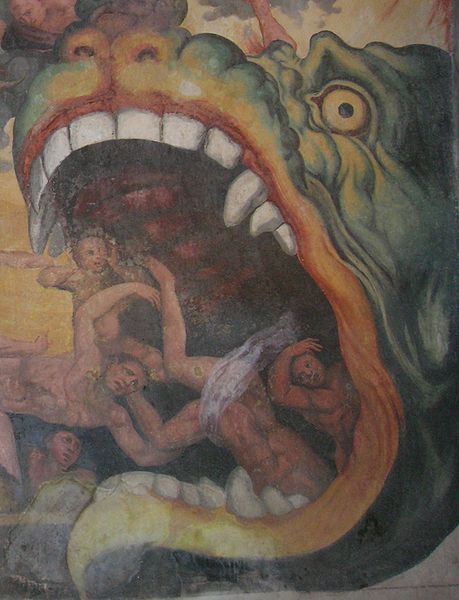

Saying “it's a bad look” cannot replace that. Who exactly is doing the looking? The Eye of Sauron? They're trying to fill a judgement-sized hole without actually making a judgement. Instead of speaking as a human being, they speak on behalf of the floating eye of a faceless voter. What does this faceless voter want?

The same thing any of us would want if we had our values, our interests, our faculty for moral judgement scooped out of our brains: We would want the best politician. That apparently means “good political management”, it means politicians who have “momentum”, the one capable of “a good look”, the one capable of making their opponents have “a bad look”.

These are all words which describe the kind of media coverage a politician is getting. In other words, our political editorials describe politicians in terms of the coverage they're getting in political editorials. It's just a self-referential circle-jerk.

The other thing that they use as a replacement for their own judgement is proxies. They quote anyone who's willing to answer the phone and say “yeah that's a bad thing”, regardless of what the question is. There's the issue of false balance, which again is well-trodden territory. But I want to focus on the systemic weakness that it creates.

By refusing to put their own judgements as human beings into a story, they create a narrative vacuum, and then they fill that vacuum with people like Jordan Williams. There's an entire industry of people like him who set themselves up to fill that vacuum, so they can control the narrative for their own private gain, or for the private gain of the people they serve. And they're invited to do so by journalists.

Journalistic integrity is supposed to be about fairness and honesty. But here's the ironic thing: In their effort to demonstrate their fairness and honesty, they've decided to stop exercising their own narrative power, and to hand it over to everyone but themselves. To politicians, to pundits, to lobbyists, to straight up sociopaths. To people who have no loyalty to fairness or honesty.

But the even greater problem is that journalism doesn't think that it is its job to fix this problem. I think the cause is that they think of their role as carrying out the process of journalism, rather than serving its principles. But being wedded to those processes means that the tactics that Big Tobacco were using fifty goddamn years ago still work today. It means that we can see and know and understand what the spin industry does, but we still can't develop an immunity to it.

If journalism was about telling self-evident truths – i.e. reporting the facts – trust wouldn't be needed. But trust is needed because truths are often difficult, or complex, or contested, and these truths are told through the narrative. If the Fourth Estate is to speak truth to power, it needs to wrestle that narrative control back from the people who want to exploit it.

And if it doesn't, it ought to stop claiming to be the Fourth Estate.