Speaker: What we think and how we vote

45 Responses


-

So National Party voters are more likely to launch spittle-flecked ideological tirades than Labour ones? You only have to read David Farrar or listen to Mike Hosking to know one side is still fully engaged in the class war.

-

David Hood, in reply to

So National party voters are more likely

Well, not really. There is a pretty big pool of National voters who, from these questions, could be ideological or issue voters; we just don't know.

We do know there are a bunch of National voters who see themselves as a bit right-wing and see National as more right-wing than they are. And Labour voters see themselves as closer to the centre than National voters do.

You could argue that voters see the National Party (being more extreme) as more likely to engage in tirades than the Labour Party.

-

Interesting stuff, David. Wish I had the time to go through my own findings on the last survey to compare and contrast. Are the questions mostly similar?

It was an interesting process last time. I think I found out a lot more about statistics than I did about NZ voters' opinions, though. I'd been hoping to find more interesting dimensions than left vs right, but of course the first PC axis accounted for most of the variance that could be accounted for in the data (which it's designed to do), and that seemed to divide the issues along fairly predictable left-right lines. The second axis accounted for very little and didn't show much of a recognizable pattern.

But then I realized that this was probably mostly because of the design of the questionnaire, which was massively full of left-right type questions and had very few questions about, for example, environmentalism. Those that did exist were strongly correlated with votes for the Greens, so I was left realizing that so much of what I was looking for was down to what questions get asked.
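To see why a questionnaire full of left-right items pushes almost everything onto the first principal component, here is a minimal pure-Python sketch. It uses simulated answers, not NZES data, and assumes five hypothetical items that are all driven by one latent left-right score plus noise. PC1's share of the variance is its eigenvalue divided by the trace of the covariance matrix. The eigenvalue is found by power iteration rather than with a PCA library.

```python
import random

def covariance_matrix(rows):
    """Sample covariance matrix of a list of equal-length numeric rows."""
    n, p = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(p)]
    centred = [[r[j] - means[j] for j in range(p)] for r in rows]
    return [[sum(c[i] * c[j] for c in centred) / (n - 1) for j in range(p)]
            for i in range(p)]

def pc1_explained_ratio(rows, iterations=200, seed=0):
    """Share of total variance captured by the first principal component.

    Power iteration finds the largest eigenvalue of the covariance matrix;
    dividing by the trace (sum of all eigenvalues = total variance) gives the share.
    """
    cov = covariance_matrix(rows)
    p = len(cov)
    rng = random.Random(seed)
    v = [rng.random() + 0.1 for _ in range(p)]  # random start, unlikely to be orthogonal to PC1
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    # Rayleigh quotient of the converged unit vector approximates the largest eigenvalue.
    largest = sum(v[i] * cov[i][j] * v[j] for i in range(p) for j in range(p))
    return largest / sum(cov[i][i] for i in range(p))

# Hypothetical data: five items all driven by one latent left-right score plus noise.
rng = random.Random(42)
loadings = [1.0, 0.8, 1.0, -0.9, -1.0]  # negative loading = reverse-coded item
one_axis = []
for _ in range(500):
    left_right = rng.gauss(0, 1)
    one_axis.append([loading * left_right + rng.gauss(0, 0.3) for loading in loadings])

# Comparison: five unrelated items with no shared dimension.
unrelated = [[rng.gauss(0, 1) for _ in loadings] for _ in range(500)]

print(f"one latent axis: PC1 explains {pc1_explained_ratio(one_axis):.0%}")
print(f"unrelated items: PC1 explains {pc1_explained_ratio(unrelated):.0%}")
```

If most items load on one dimension, PC1 dominates by construction. A second dimension, such as environmentalism, can only show up if enough questions actually measure it.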

I'll be very interested to see what else you find in this survey. The finding that there is a left-right ordering of our parties seems to be unchanged. National is still at the far right of the parties with meaningful numbers. What else are you thinking of looking at?

-

David Hood, in reply to

Ben, you might get a sense that I have my doubts the left-right umbrella terms have any more use than "I am voting National so I must be on the right, but I feel like they are a bit more extreme than me", rather than being a determinant. It's just that, as you said, there are a lot of questions that place things in that context.

It's a similar survey, with some potential for linking results over time (he said, nodding towards the future): a mix of general political questions, demographics, and questions about issues around election time.

-

Very interesting indeed, David! Thank you for all your hard work in investigating this for us.

-

Ian Dalziel, in reply to

tirade unionists...

spittle-flecked

Ahh, welcome to the sleepy little hamlet of Spittlefleck, where village idiots outnumber the rest. More colic than bucolic, and the smouldering resentments are abiding proof that it takes a 'child' to raze a village.

-

BenWilson, in reply to

It's kind of interesting that if you ask the right questions, then the left to right scale does seem to form the most important principal component axis, and the parties do seem to order themselves according to the self-rated left/rightness of the respondents. I don't know if that shows more than a vague consensus on what these terms actually mean, though.

I wasn't able to model much of an improvement in accuracy from political opinions over the null model (which is that everyone votes National). That would be 42% right, and the best prediction model, using random forests and taking hundreds of opinions into account, got that up to 57% (cross-validated). So maybe a 15 percentage point improvement in the ability to guess how someone votes was attained from the answers to 74 questions.
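The null-model baseline and the cross-validation around it can be sketched in a few lines of standard-library Python. This is a sketch, not Ben's code. The 1,000 respondents and their party split are hypothetical, chosen only so that National sits at 42%. The random forest itself is not implemented here (in practice it would come from a library). Its 57% figure is simply taken from the comment above.

```python
import random
from collections import Counter

def k_fold_accuracy(labels, features, fit, k=5, seed=0):
    """Mean held-out accuracy over k folds.

    fit(train_features, train_labels) must return a predict(feature) function.
    """
    indices = list(range(len(labels)))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    scores = []
    for fold in folds:
        held_out = set(fold)
        train = [i for i in indices if i not in held_out]
        predict = fit([features[i] for i in train], [labels[i] for i in train])
        correct = sum(predict(features[i]) == labels[i] for i in fold)
        scores.append(correct / len(fold))
    return sum(scores) / k

def fit_null_model(train_features, train_labels):
    """Null model: ignore every answer and always predict the most common party."""
    majority = Counter(train_labels).most_common(1)[0][0]
    return lambda _features: majority

# Hypothetical 1,000 respondents, with National at 42% as in the comment above.
shares = {"National": 420, "Labour": 250, "Green": 110, "NZ_First": 90, "Other": 130}
labels = [party for party, n in shares.items() for _ in range(n)]
features = [None] * len(labels)  # the null model never looks at the answers

null_acc = k_fold_accuracy(labels, features, fit_null_model)
forest_acc = 0.57  # reported random-forest figure, not computed here
gain = forest_acc - null_acc
print(f"null model {null_acc:.0%}, gain {gain * 100:.0f} points ({gain / null_acc:.0%} relative)")
```

Any other model can be passed in as `fit` and scored on the same folds. Note that a 15 percentage point gain over a 42% baseline is roughly a 36% relative improvement, which is why the two ways of stating it are worth keeping apart.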

Not sure what to make of that. Either the wrong questions were being asked (obviously I eliminated questions like "which party did you vote for" and "which party are you most sympathetic to"), or political opinion (divorced from specific knowledge of their opinion on particular parties) accounts for very little of the way people actually vote. Or a bit of both.

There was me hoping to build a 10-question tree that could ascertain who you were going to vote for without ever mentioning a party name (or a proxy for it, like "the government"). But no.

It's not as if this is the terrible fault of people not knowing what they want. I think it's much more a result of the parties being broad churches that, to a significant degree, claim to offer much the same things. The weak signal is not in what people want; it's in which party will give that to them. But this is speculation on my part. I can't prove any of that.

-

linger, in reply to

Part of the difficulty in attempting to build a general model for voting choices may be that often the same label (e.g. “business-friendly”) will have different meanings and evaluations to voters for different parties.

e.g. National basically owns the label “business-friendly”. However hard Labour tries to market itself on that basis too, it doesn’t work for them: even though both National and Labour voters may be generally in favour of government supporting businesses, National voters believe National is more business-friendly than Labour, and only existing Labour voters believe Labour is actually business-friendly. (Meanwhile, Green voters may interpret “business-friendliness” in rather different terms, either evaluating it with much lower priority – if not actually negatively – or else equating it with, say, promoting efficiency and sustainability in business.)

Result in this case: there are probably strong entrenched differences in opinion, but a question about, say, “importance of looking after businesses” probably won’t identify any strong connection to voting patterns, except through evaluations of named parties.

-

linger, in reply to

Probably questions measuring priorities placed on different issues would be more successful predictors of voting preference than questions measuring reactions to single issues?

-

BenWilson, in reply to

Yes, the NZES was not built for the purpose I was using it, so it's not that surprising it wasn't that fit for it. Still a very interesting resource though, there's nothing else like it in NZ.

-

BenWilson, in reply to

I'd say prioritizing is a big part of what we do when making decisions. I'd go further, though, and say that most people probably essentialize their thinking, homing in on a small group of issues that they think matter. But these are different for everyone, and even when they are the same, who people believe will sort out these issues can be very different. It's also very hard to tell when someone claims a particular issue is the most important thing but is actually making their decision based on factors they can't admit, possibly not even to themselves. I'd say racist factors are like this.

In short, prediction of humans is hard! Even if they actually tell you who they would vote for, that's not 100% accurate.

That was what was really interesting to me, that despite a far richer set of questions, the ability to guess who they would vote for wasn't a great deal better than just asking them if they are left or right wing.

I never looked into the influence of demographic factors. It's an interesting question. I bet demographics are highly collinear with opinion in many instances, so the predictive effects would not stack. But I'm guessing.

-

There is both a series of questions rating the importance of standard issues from the last election, and a free-text "what is the most important issue to you" kind of question where people could write whatever they feel (which has also been coded into general and specific subject categories).

Because I'm curious, this evening I'll take the free text and figure out the most common words written, broken down by the party each respondent voted for.

-

I haven't done any stemming (removing suffixes to bring related terms together), but here are the 10 commonest terms written by NZES respondents in the most-serious-issue free-text question, by party voted for. I removed common stop words like a, the, not, etc., and counted only words that come up at least three times; where fewer than 10 terms reached that threshold, the list is shorter. As I did nothing about breaking ties, the tail end of each list may be a bit arbitrary, since the last terms could be tied with others that would push the list past 10.
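The counting described above can be sketched in Python as follows. David's actual code was in R and is not shown in the thread. The stop-word set here is an illustrative subset, and the responses are made up. The sketch lower-cases each answer, drops stop words, keeps terms seen at least three times, and returns up to 10 of them. Ties are left unbroken: `Counter.most_common` keeps equal counts in first-seen order, which is just as arbitrary as the tail of the lists above.

```python
import re
from collections import Counter

STOP_WORDS = {"a", "an", "the", "not", "and", "of", "to", "in", "is", "for", "don't"}  # illustrative subset

def top_terms(responses, n=10, min_count=3):
    """Most common non-stop-word terms across one voter group's free-text answers.

    Terms seen fewer than min_count times are dropped, so the list can be
    shorter than n. Equal counts stay in first-seen order (no real tie-breaking).
    """
    counts = Counter(
        word
        for text in responses
        for word in re.findall(r"[a-z']+", text.lower())
        if word not in STOP_WORDS
    )
    return [term for term, c in counts.most_common() if c >= min_count][:n]

# Made-up answers for two hypothetical voter groups.
responses_by_party = {
    "Party_A": ["The economy", "economy and housing", "housing costs", "the economy", "housing"],
    "Party_B": ["child poverty", "poverty", "the gap between rich and poor", "poverty and housing"],
}
for party, answers in responses_by_party.items():
    print(party, top_terms(answers))
```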

Conservative: economy, government, know, housing, issues, land, no, cost, education, employment

Green: poverty, environment, child, education, rich, between, poor, inequality, gap, economy

Internet_Mana_Party: poverty, housing, child, no, children, feeding, schools

Labour: poverty, housing, health, nz, education, between, rich, child, assets, gap

Māori_Party: economy, health, poverty, employment, selling, education, economic, nz, our, education

National: economy, economic, stability, nz, housing, poverty, stable, dirty, education, politics

NZ_First: selling, economy, nz, housing, people, land, employment, overseas, tax, poverty

Didn’t Vote: poverty, economy, nz, dirty, politics, housing, health, jobs, know, child

ACT and United Future had insufficient representation to have any words occur 3 times or more.

-

BenWilson, in reply to

There is both a series of questions rating the importance of standard issues from the last election

Yes, although rating and ranking are not the same thing. Not that either is clearly superior, but I do think that ranking is a pretty normal mental process when faced with massive numbers of issues. I don't know how many people genuinely make decisions by aggregating hundreds of ratings of, say, parties' performance on policies, and then impartially looking at the highest score and choosing the party that matches. I'd say that they mostly choose first, then crystallize their decision down to whatever small subset of questions matters most to them, and claim the decision boils down to that. Which it might even have done, at a subconscious level. Maybe there was some wavering over the critical issue, if more than one of the parties credibly contested it in their minds.

and a free-text “what is the most important issue to you” kind of question where people could write whatever they feel (which has also been coded into general and specific subject categories).

Which is very important. But it suffers from having so many possible answers and interpretations that it might be hard to get robust numbers except for the large parties and the most common platitudes.

-

BenWilson, in reply to

Love it. "Dirty", a reason to vote National, or not at all!

-

I should really link up "dirty politics" and "don't know" as single terms. "Don't" was on my list of excluded terms, hence the Conservatives were in the "know" rather than "don't know".
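One way to link the phrases is to merge known two-word phrases into single tokens before stop words are removed. That way "don't" is consumed by "don't_know" before the stop-word filter can throw it away. The sketch below does this in Python. The phrase table and stop-word set are illustrative assumptions, and it assumes straight apostrophes (a curly ’ would need normalising first).

```python
import re

# Illustrative phrase table: two-word phrase -> single token.
PHRASES = {("dirty", "politics"): "dirty_politics", ("don't", "know"): "don't_know"}
STOP_WORDS = {"a", "the", "not", "and", "don't"}

def tokenize(text):
    """Lower-case and split, merge known two-word phrases, then drop stop words."""
    words = re.findall(r"[a-z']+", text.lower())
    merged, i = [], 0
    while i < len(words):
        pair = tuple(words[i:i + 2])
        if pair in PHRASES:
            merged.append(PHRASES[pair])  # consume both words as one term
            i += 2
        else:
            merged.append(words[i])
            i += 1
    # Stop words are removed only after merging, so "don't know" survives as a unit.
    return [w for w in merged if w not in STOP_WORDS]

print(tokenize("Dirty politics and the economy"))
print(tokenize("Don't know"))
```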

-

I tried a better way of looking at it: rather than absolute amounts, this shows how often each voter group is (+) or is not (-) using terms, relative to other voter groups' use of those terms. The more terms beside a party, the more that party had unusual term frequencies (I took the 100 most unusual and grouped them by party). For each party, the terms are in decreasing order of unusualness.

Conservative:-poverty, +economy, -health, +government, +land, -child, -jobs, +justice, +moral, +leaders, +law, +management, +don't_know, +financial, +cost, +issues, -economic

Green:+poverty, +environment, -economy, +rich, +between, +inequality, +child, +poor, +gap, -selling, +climate, +change, -employment, -health

Internet_Mana_Party:-economy, +schools, +feeding, +wages, +children, +poverty, +sales, +marijuana, +support, +tppa, +assets, +low, -education, +issue, +important, +income, +child, -health, -land, -dirty_politics, +housing

Labour:-economy, +poverty, +between, +rich, +health, +poor, +gap, +inequality, +wage, +assets

Māori_Party:+health, +education, +economy, +employment, +selling, +whanau, +economic, +settlements, +economics, -issues, +growing, +leadership, +cost, +poverty, -rich, +lack, -government, +families

National:+economy, -poverty, +stability, +economic, -employment, -selling, +stable, +keeping, -poor, -assets

Did_Not_Vote:-economy, -poverty, +follow

NZ_First:+selling, -poverty, +people, +immigration, -economy, +overseas, +land

Focusing on things common to all groups, a pretty clear division comes out of the data between

(poverty discussed, economy not discussed) parties, and (economy discussed, poverty not discussed) parties,

with two parties, NZ First (whose voters thought of themselves as in the middle) and the Māori Party, forming their own opposing pair:

(poverty discussed, economy discussed) the Māori Party, and

(economy not discussed, poverty not discussed) NZ First and Did Not Vote.

Many of the parties can then be seen to have their specialist themes among their supporters, as well as common ground.

-

So the + or - is whether the term occurs more or less proportionately per group than the mean proportion across all groups? So if "economy" occurs in, say, 50% of responses, but in 51% of National voter responses, then it's a +? Just trying to get my head around what you're doing there.

-

David Hood, in reply to

Following the term economy through:

I get the number of mentions of every term for every party. This gives the raw number of mentions of every term, for all of the parties tested.

Then I generate the percentages for each term for each party, dividing the raw number by the total number of terms for that party (which normalises for how many terms each party's voters wrote). This effectively gives the proportion of each party's terms made up by each term.

Then I calculate the mean proportion for each term (this is not the mean across people; it is the mean of the proportions across party voter groups).

Then I subtract the mean from each term for each party, to get how far from "typical" each party's frequency of the term is.

Then I rank all the terms in order from most atypical to least atypical (closest to the mean) and take the 100 most atypical.

Then I reorganise them into a list for each party.
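Here are the steps above as a short Python sketch. It is a re-creation from the description, not the R code mentioned below, and the toy counts are made up. The steps are: raw counts, then per-party proportions, then the mean proportion across groups, then the deviation from that mean, then the top N deviations by absolute size, then a regrouping by party with a + or - sign.

```python
from collections import defaultdict

def atypical_terms(counts, top_n=100):
    """counts: {party: {term: raw_count}} -> {party: [(sign, term), ...]}, most atypical first."""
    parties = list(counts)
    terms = sorted({t for c in counts.values() for t in c})
    # Proportion of each party's terms made up by each term (normalises for response volume).
    props = {}
    for p in parties:
        total = sum(counts[p].values())
        props[p] = {t: counts[p].get(t, 0) / total for t in terms}
    # Unweighted mean proportion across party groups (not across people).
    mean = {t: sum(props[p][t] for p in parties) / len(parties) for t in terms}
    # How far each party's proportion sits from that mean.
    deviations = [(props[p][t] - mean[t], p, t) for p in parties for t in terms]
    # Keep the top_n most atypical party/term pairs by absolute deviation.
    deviations.sort(key=lambda d: abs(d[0]), reverse=True)
    # Regroup by party: + for unusually high usage, - for unusually low.
    result = defaultdict(list)
    for dev, p, t in deviations[:top_n]:
        result[p].append(("+" if dev > 0 else "-", t))
    return dict(result)

# Toy counts for three hypothetical groups.
counts = {
    "A": {"economy": 6, "stability": 2, "poverty": 2},
    "B": {"poverty": 6, "gap": 2, "economy": 2},
    "C": {"poverty": 5, "economy": 5},
}
print(atypical_terms(counts, top_n=4))
```

Group C sits close to the mean on everything, so it gets no terms at all. This mirrors how a group can end up with a very short list.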

Because economy is a very polarised term (each party's voters either used it a lot or not at all), no party was near the mean in its usage, so it showed up for every party. Similarly for poverty, but with usage in the opposite direction. + means unusually high usage, - unusually low usage. So you are correct about the sign, but a term only appears if its difference is among the largest across all terms in the data.

Then we've got terms like moral for the Conservatives or environment for the Greens, where the term was used enough to put it among the overall 100 most extreme terms, but other parties' usage of the term was closer to the mean, so it did not make the shortlist for anyone else.

I've put up the R code I used, if it helps, but it wasn't written for an audience; it was just something I whipped up quickly and may be a bit dense in places.

-

OK, I think I get that. So you would get a -economy for a group if, for example, economy was not one of the terms mentioned at all for that group, putting it well below the mean?

It’s idiosyncratic but I think I get what you’re trying to do. Kind of like an ANOVA, looking for significant differences (in spirit, obviously, not in the detail)?

-

David Hood, in reply to

So you would get a -economy for a group if, for example, economy was not one of the terms mentioned at all for that group, putting it well below the mean?

Correct, assuming that the mean was not just above zero (one group using it and all the rest not doing so would be a case like moral for the Conservatives, where the mean is near zero so it shows up for no-one else).

You could look at it as inspired by ANOVA. I would probably view it as a cross between a text-analysis term-document matrix and outlier detection, but I will (idiosyncratically, as you say) draw on whatever musical instruments inspire me when writing a data analysis song.

-

linger, in reply to

What came to mind for me is Yule’s Q = (a – b) / (a + b) which has been used to compare relative word frequency (i.e., word counts normalised for text length) in two language samples (with respective relative frequencies a, b per million words). What you’re doing seems to be an extension of that approach to multiple samples.

-

So that is the change from a to b as a proportion of the combined proportions? Similar, I suspect, but I used an aggregate figure for b to give a common baseline for comparing multiple entries, and didn't divide. I imagine not dividing means that big differences between big proportions matter more than little differences between little proportions, but I am comfortable with that in the story this data is telling.
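The effect of not dividing can be checked with two made-up terms. One is common and used 1.5 times as often as the baseline; the other is rare and used 3 times as often. The raw difference ranks the common term as more unusual, while the divided, Yule-style form ranks the rare term higher. The frequencies are hypothetical and only there to show the contrast.

```python
def raw_difference(a, baseline):
    """Difference from a common baseline, without dividing."""
    return a - baseline

def normalised_difference(a, baseline):
    """Yule-style (a - b) / (a + b), using the baseline as b."""
    return (a - baseline) / (a + baseline)

# Hypothetical relative frequencies: (group, baseline).
common = (0.060, 0.040)  # frequent term, 1.5x the baseline
rare = (0.003, 0.001)    # rare term, 3x the baseline

for name, (a, b) in [("common", common), ("rare", rare)]:
    print(name, round(raw_difference(a, b), 4), round(normalised_difference(a, b), 2))
```

Neither choice is wrong. The undivided version favours differences that involve a lot of actual word usage, while the divided version treats a rare term's relative jump as equally notable.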

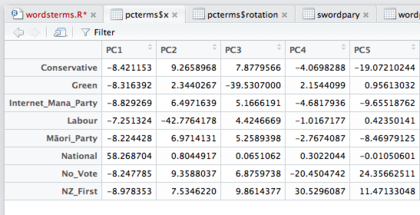

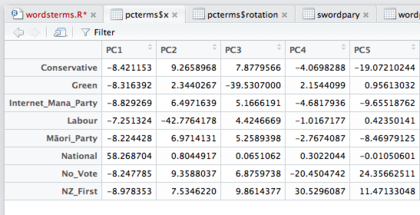

I thought this evening I might run a principal components analysis on the term frequencies and see what kinds of party clustering it shows up. I think the left/right axis Ben found in the survey results from the previous election looks like it is generally mirrored in the economy/poverty axis (which are inverses of each other except in the middle).

-

A basic PCA of the entire data is essentially useless. PC1 puts National way off by itself with everyone else clumped, PC2 puts Labour off by itself, and PC3 puts the Greens off by themselves. My working theory is that because National got the most votes (and so the most representation in the survey), it has the most unique vocabulary marking it as different, but this is a function of sample size (and vocabulary richness, I suppose) rather than of inherent differences.
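The sample-size part of that working theory is easy to illustrate with a simulation. It does not test the NZES data itself. The sketch below draws every word from one fixed Zipf-like distribution (a hypothetical 2,000-word vocabulary, with frequency proportional to 1/rank). A larger sample still shows many more distinct words, so a bigger voter group can look lexically "unusual" with no real difference in how its members talk.

```python
import random

def vocabulary_growth(sizes, vocab_size=2000, seed=1):
    """Distinct word types seen in the first n draws, for each n in sizes.

    All draws come from the same fixed Zipf-like distribution, so any growth
    in vocabulary is due to sample size alone.
    """
    rng = random.Random(seed)
    vocab = [f"w{rank}" for rank in range(1, vocab_size + 1)]
    weights = [1 / rank for rank in range(1, vocab_size + 1)]  # frequency ~ 1/rank
    draws = rng.choices(vocab, weights=weights, k=max(sizes))
    return [len(set(draws[:n])) for n in sizes]

sizes = [200, 1000, 5000]  # hypothetical word totals for a small, medium and large voter group
print(dict(zip(sizes, vocabulary_growth(sizes))))
```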

I am going to play around with it experimenting/inventing approaches as my hunch is there is something interesting in here.

-

linger, in reply to

Yes, pretty much.

Yule's Q is a dimensionless ratio that varies between -1 and +1:

terms with Q = -1 are associated only with sample B (a = 0, hence (a - b) = -b and (a + b) = b, hence Q = -b/b = -1);

those with Q = +1 only with sample A (b = 0, hence (a - b) = a and (a + b) = a, hence Q = a/a = +1);

Q = 0 corresponds to no difference (a = b, hence (a - b) = 0).
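The three cases above can be checked directly with a small Python function. It uses the formula as given in this thread: a and b are the relative frequencies of a term in samples A and B. The same name is also used in statistics for the 2×2 association coefficient (ad - bc)/(ad + bc), which this is not. The sample frequencies in the checks are arbitrary.

```python
def yules_q(a, b):
    """(a - b) / (a + b) for a term's relative frequencies in sample A (a) and sample B (b).

    Ranges from -1 (term only in B) to +1 (term only in A); 0 means no difference.
    """
    if a + b == 0:
        raise ValueError("term absent from both samples")
    return (a - b) / (a + b)

print(yules_q(0, 12.5), yules_q(3.2, 0), yules_q(7, 7), yules_q(1, 3))
```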
